Big data, data analytics, data governance, data visualization, data integration and more. Discover these key data terms for a deeper understanding.

In today’s data-driven world, it’s essential to be familiar with key data terms to effectively navigate and make sense of the vast amounts of information available. Here are 15 important data terms to know:

Big data

Large and complex data sets that are difficult to manage, process or analyze using conventional data processing techniques are referred to as “big data.” Big data is typically characterized by high volume, velocity and variety. These massive amounts of structured and unstructured data generally come from various sources, including social media, sensors, devices and internet platforms.

Big data analytics involves methods and tools to collect, organize, manage and analyze these vast data sets to identify important trends, patterns and insights that can guide business decisions, innovation, and tactics.
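Big data tools commonly split work into a "map" step that runs independently on each partition of the data and a "reduce" step that merges the partial results. The sketch below imitates that pattern in plain Python on a tiny, made-up list of social media posts; the function names and the hashtag-counting task are illustrative assumptions, and a real system would distribute the chunks across many machines instead of looping over them.

```python
from collections import Counter
from functools import reduce
from typing import Iterable, Iterator


def read_in_chunks(records: Iterable, chunk_size: int) -> Iterator[list]:
    """Yield fixed-size batches so the full data set never sits in memory at once."""
    chunk: list = []
    for record in records:
        chunk.append(record)
        if len(chunk) == chunk_size:
            yield chunk
            chunk = []
    if chunk:
        yield chunk


def map_chunk(chunk: list[str]) -> Counter:
    """Map step: count hashtags within a single chunk, independently of the others."""
    counts: Counter = Counter()
    for post in chunk:
        counts.update(word.lower() for word in post.split() if word.startswith("#"))
    return counts


def reduce_counts(a: Counter, b: Counter) -> Counter:
    """Reduce step: merge the partial counts produced by two chunks."""
    return a + b


def top_hashtags(posts: Iterable[str], chunk_size: int = 2, n: int = 3) -> list[tuple[str, int]]:
    partials = (map_chunk(chunk) for chunk in read_in_chunks(posts, chunk_size))
    total = reduce(reduce_counts, partials, Counter())
    return total.most_common(n)


# Hypothetical sample data standing in for a large social media feed.
posts = ["Loving #data and #AI", "#data pipelines at scale", "New #AI model released", "#Data is everywhere"]
print(top_hashtags(posts))
```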

DevOps

DevOps, short for development and operations, is a collaborative approach to software development and deployment that emphasizes communication, collaboration, and integration between development and operations teams.

It aims to boost efficiency, improve overall product quality and streamline the software delivery process. To automate and enhance the software development lifecycle, DevOps combines practices, tools and a shared culture. It encourages close communication between programmers, system administrators and other stakeholders involved in creating and deploying new software.

Continuous integration, delivery and deployment are key concepts in DevOps: code changes are merged frequently and tested automatically, producing faster, more reliable software releases. DevOps also incorporates infrastructure automation, monitoring and feedback loops to ensure rapid response and continual improvement.
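To make the continuous integration idea concrete, here is a toy sketch of a pipeline that runs each stage in order and stops at the first failure, so a broken change never reaches deployment. The stage names and the `run_pipeline` helper are hypothetical; real teams usually describe these stages in their CI service's own configuration format rather than in application code.

```python
from typing import Callable

# A stage is a name plus a callable that returns True on success.
# These stages are hypothetical placeholders, not a real CI tool's API.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure (fail fast)."""
    log: list[str] = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'passed' if ok else 'FAILED'}")
        if not ok:
            return False, log
    return True, log


def add(a: int, b: int) -> int:
    """Stand-in for the application code being changed."""
    return a + b


def unit_tests() -> bool:
    """Stand-in for running the project's test suite on the merged change."""
    return add(2, 3) == 5 and add(-1, 1) == 0


stages: list[Stage] = [
    ("build", lambda: True),
    ("unit tests", unit_tests),
    ("deploy to staging", lambda: True),
]
print(run_pipeline(stages))
```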

Which provides more value?

1. Backend

2. Frontend

3. DevOps— Programmer Memes ~ (@iammemeloper) May 22, 2023

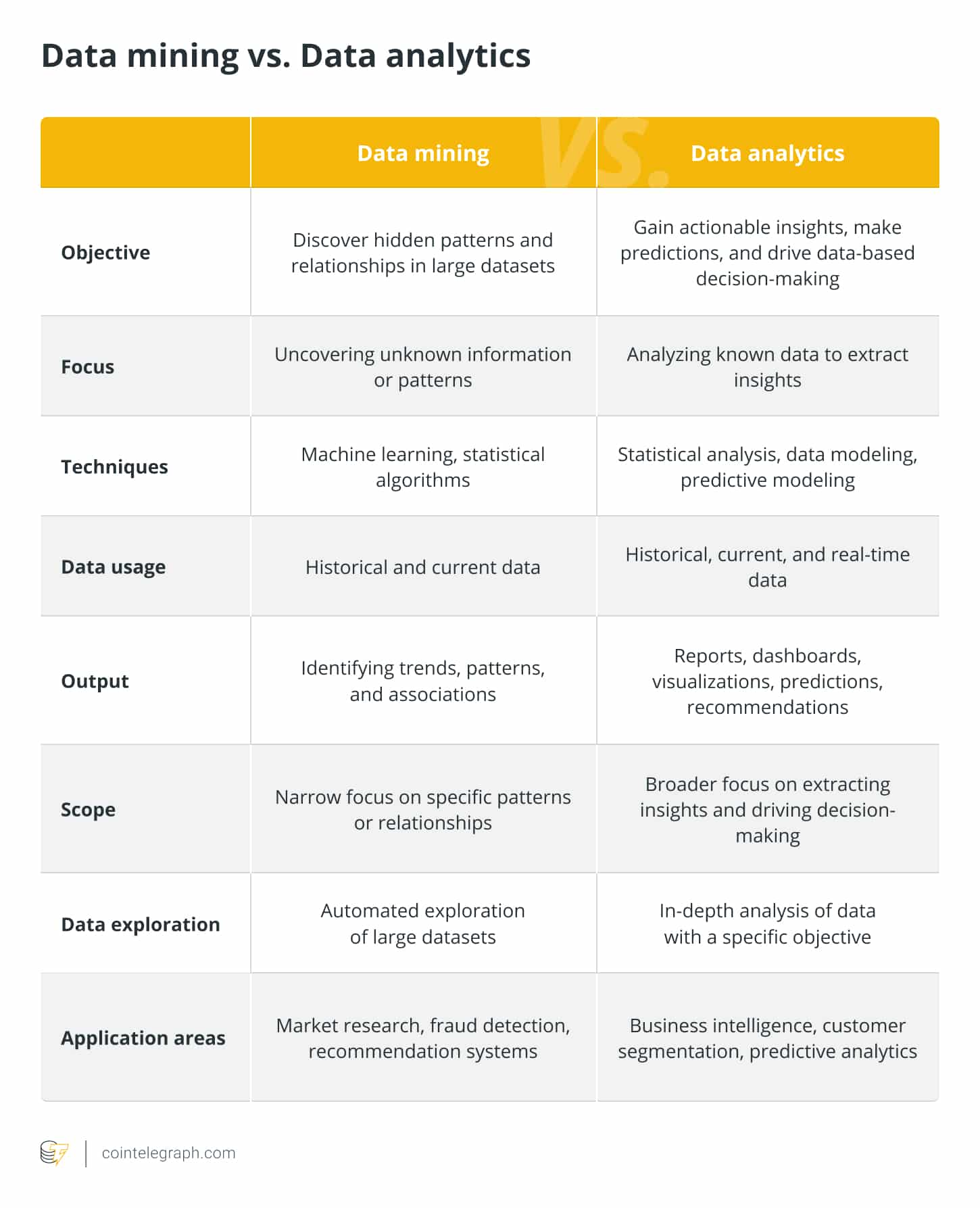

Data mining

Data mining is the extraction of useful patterns, information or insights from large databases. It involves evaluating data to spot hidden patterns, correlations or trends that support informed decisions or predictions. Common data mining techniques include clustering, classification, regression and association rule mining.
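Clustering, one of the techniques listed above, can be sketched with a minimal k-means implementation in plain Python. The customer points (for example, annual spend and monthly visits) are invented, and this version uses simple random initialization with a fixed iteration cap; library implementations typically add smarter seeding and multiple restarts.

```python
import math
import random

Point = tuple[float, float]


def kmeans(points: list[Point], k: int, iterations: int = 20, seed: int = 0):
    """Minimal k-means: assign each point to its nearest centroid, then move centroids to cluster means."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k distinct data points
    clusters: list[list[Point]] = []
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        new_centroids = []
        for i, cluster in enumerate(clusters):
            if cluster:
                new_centroids.append(tuple(sum(coord) / len(cluster) for coord in zip(*cluster)))
            else:
                new_centroids.append(centroids[i])  # keep the old centroid if a cluster empties
        if new_centroids == centroids:
            break  # converged: assignments will no longer change
        centroids = new_centroids
    return centroids, clusters


# Hypothetical customers: (annual spend in $1,000s, visits per month).
customers = [(1, 2), (1.5, 1.8), (1, 1.5), (8, 8), (9, 9.5), (8.5, 9)]
centroids, clusters = kmeans(customers, k=2)
print(clusters)
```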

Data analytics

Data analytics is the process of exploring, interpreting and analyzing data to find significant trends, patterns, and insights. To extract useful information from large data sets, it employs a variety of statistical and analytical tools, empowering businesses to make data-driven decisions.

While data analytics involves studying and interpreting data to obtain insights and make knowledgeable decisions, data mining concentrates on finding patterns and relationships in massive data sets. Descriptive, diagnostic, predictive and prescriptive analytics are all included in data analytics, which offers businesses useful information for strategy creation and company management.
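Two of the four analytics types can be illustrated in a few lines: a descriptive summary of past monthly sales, and a deliberately simple predictive step that fits a least-squares trend line and extrapolates one month ahead. The sales figures are invented, and a straight-line trend is an assumption chosen for clarity, not a recommended forecasting method.

```python
import statistics


def describe(values: list[float]) -> dict[str, float]:
    """Descriptive analytics: summarize what has already happened."""
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "stdev": statistics.stdev(values),
        "min": min(values),
        "max": max(values),
    }


def forecast_next(values: list[float]) -> float:
    """Predictive analytics (simplified): fit y = intercept + slope * t by least squares, then extrapolate one step."""
    n = len(values)
    ts = range(n)
    t_mean = (n - 1) / 2
    y_mean = statistics.mean(values)
    slope = sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, values)) / sum((t - t_mean) ** 2 for t in ts)
    intercept = y_mean - slope * t_mean
    return intercept + slope * n


# Hypothetical monthly sales, in thousands of dollars.
monthly_sales = [120, 135, 128, 150, 160]
print(describe(monthly_sales))
print(forecast_next(monthly_sales))
```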

Data governance

Data governance refers to the overall management and control of data in an organization, including policies, procedures and standards for data quality, security, and compliance. For instance, a business might implement data governance procedures to help ensure the privacy, security and accuracy of consumer data.
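Governance policies are often easier to enforce when they are expressed as data rather than left in documents. The sketch below assumes a hypothetical policy table mapping each field to the roles allowed to see it unmasked; any field the policy does not classify is withheld by default. The field names and roles are illustrative and not taken from any specific governance tool.

```python
# Hypothetical policy: which roles may see each field unmasked.
POLICY: dict[str, set[str]] = {
    "customer_id": {"analyst", "support", "admin"},
    "name": {"support", "admin"},
    "email": {"admin"},
    "total_spend": {"analyst", "admin"},
}


def apply_policy(record: dict[str, object], role: str) -> dict[str, object]:
    """Return a copy of the record with restricted fields masked for this role.

    Fields missing from the policy are dropped entirely (deny by default).
    """
    visible: dict[str, object] = {}
    for field, value in record.items():
        allowed = POLICY.get(field)
        if allowed is None:
            continue  # unclassified field: not governed, so not exposed
        visible[field] = value if role in allowed else "***"
    return visible


record = {"customer_id": 42, "name": "Ada", "email": "ada@example.com", "total_spend": 310.5, "notes": "VIP"}
print(apply_policy(record, "analyst"))
```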

Data visualization

Data visualization involves creating and presenting visual representations of data to aid understanding, analysis and decision-making. Charts, graphs and maps present data in a visually appealing, easy-to-understand way. For instance, a marketing team might build interactive dashboards and visualizations to assess customer engagement and campaign effectiveness.
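A real dashboard would use a plotting or BI library, but the core idea of mapping numbers to visual length can be shown without dependencies. This sketch renders a horizontal text bar chart scaled to the largest value; the campaign names and click-through rates are made up for illustration.

```python
def bar_chart(data: dict[str, float], width: int = 20) -> str:
    """Render a horizontal bar chart as text, scaling every bar to the largest value."""
    if not data:
        return ""
    label_width = max(len(label) for label in data)
    peak = max(data.values())
    lines = []
    for label, value in data.items():
        bar = "#" * round(value / peak * width) if peak > 0 else ""
        lines.append(f"{label.ljust(label_width)} | {bar} {value}")
    return "\n".join(lines)


# Hypothetical click-through rates (%) by campaign channel.
print(bar_chart({"Email": 4.2, "Social": 2.1, "Search": 6.3}))
```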

Data architecture

Data architecture refers to the design and organization of data systems, including data models, structures and integration processes. For instance, a bank might have a data architecture that combines customer data from several channels, such as online, mobile and in-person, to give customers a consistent view of their interactions.
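One way to model the bank example is a shared interactions table in which every channel records events tagged with their source, plus a view that rolls them up into one row per customer. The schema below is a hypothetical sketch using Python's built-in `sqlite3` module; the table, column and channel names are illustrative, and dates are stored as ISO-8601 text so that `MAX` picks the latest one.

```python
import sqlite3

# Hypothetical schema: table names, columns and channel values are illustrative.
SCHEMA = """
CREATE TABLE customers (
    customer_id INTEGER PRIMARY KEY,
    full_name   TEXT NOT NULL
);

-- Every channel writes to the same table, tagged with where the event happened.
CREATE TABLE interactions (
    interaction_id INTEGER PRIMARY KEY,
    customer_id    INTEGER NOT NULL REFERENCES customers(customer_id),
    channel        TEXT NOT NULL CHECK (channel IN ('online', 'mobile', 'branch')),
    occurred_at    TEXT NOT NULL,
    description    TEXT
);

-- One row per customer, summarizing activity across all channels.
CREATE VIEW customer_overview AS
SELECT c.customer_id,
       c.full_name,
       COUNT(i.interaction_id)          AS total_interactions,
       GROUP_CONCAT(DISTINCT i.channel) AS channels_used,
       MAX(i.occurred_at)               AS last_contact
FROM customers c
LEFT JOIN interactions i ON i.customer_id = c.customer_id
GROUP BY c.customer_id, c.full_name;
"""


def build_demo() -> sqlite3.Connection:
    """Create an in-memory database with one customer active on three channels."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.execute("INSERT INTO customers VALUES (1, 'Ada Lovelace')")
    conn.executemany(
        "INSERT INTO interactions (customer_id, channel, occurred_at, description) VALUES (?, ?, ?, ?)",
        [
            (1, "online", "2023-05-01", "Paid a bill"),
            (1, "mobile", "2023-05-03", "Checked balance"),
            (1, "branch", "2023-05-10", "Opened a savings account"),
            (1, "mobile", "2023-05-12", "Transferred funds"),
        ],
    )
    return conn


row = build_demo().execute("SELECT * FROM customer_overview").fetchone()
print(row)  # the order of channels inside channels_used is not guaranteed
```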

Data warehouse

A data warehouse is a centralized repository that stores and organizes large volumes of structured (and often semi-structured) data from various sources, providing a consolidated view for analysis and reporting. For instance, a clothing retailer might use a data warehouse to examine customer buying trends and improve inventory control across several store locations.
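Warehouses are frequently modeled as a star schema: a central fact table of measurable events (here, sales) joined to dimension tables that describe them (stores and products). The sketch below uses Python's built-in `sqlite3` as a stand-in for a real warehouse engine; the table names, cities and figures are made up for the clothing-retailer example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension tables: descriptive attributes.
CREATE TABLE dim_store   (store_id INTEGER PRIMARY KEY, city TEXT);
CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, category TEXT);

-- Fact table: one row per sale, with foreign keys into the dimensions.
CREATE TABLE fact_sales (
    store_id   INTEGER REFERENCES dim_store(store_id),
    product_id INTEGER REFERENCES dim_product(product_id),
    sale_date  TEXT,
    units      INTEGER,
    revenue    REAL
);

-- Hypothetical sample data.
INSERT INTO dim_store VALUES (1, 'Austin'), (2, 'Denver');
INSERT INTO dim_product VALUES (10, 'Jackets'), (11, 'T-shirts');
INSERT INTO fact_sales VALUES
    (1, 10, '2023-05-01', 3, 240.0),
    (1, 11, '2023-05-01', 10, 150.0),
    (2, 10, '2023-05-02', 1, 80.0),
    (2, 11, '2023-05-02', 12, 180.0),
    (1, 11, '2023-05-03', 5, 75.0);
""")

# Analytical query: join the fact table to its dimensions, then aggregate.
UNITS_BY_STORE_AND_CATEGORY = """
SELECT s.city, p.category, SUM(f.units) AS units_sold
FROM fact_sales f
JOIN dim_store s   ON s.store_id = f.store_id
JOIN dim_product p ON p.product_id = f.product_id
GROUP BY s.city, p.category
ORDER BY s.city, p.category;
"""
rows = conn.execute(UNITS_BY_STORE_AND_CATEGORY).fetchall()
print(rows)
```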

How To Learn?

Data Warehouse fundamentals:

✅ Data Modelling

✅ OLTP vs OLAP

✅ Extract Transform Load (ETL)

✅ Data Ingestion

✅ Schema Types (Snowflake vs Star Schema)

✅ Fact vs Dim Tables

✅ Data Partitioning and Clustering

✅ Data Marts

Source: Darshil | Data Engineer (@parmardarshil07), March 23, 2023

Data migration

Data migration is the process of moving data from one system or storage environment to another. Data is first extracted from the source system, then transformed and cleaned as needed, and finally loaded into the destination system. Data migration may occur when businesses upgrade their software, switch to new software programs or combine data from several sources.

For instance, a business might transfer client information from an outdated customer relationship management (CRM) platform to a new one. The data would first be extracted from the old system, then mapped and transformed to match the new system’s data format, and finally loaded into the new CRM system. Done carefully, this ensures that all client data is transferred accurately and efficiently, allowing the business to continue managing customer relationships without interruption.
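The extract, map/transform and load steps of such a CRM migration can be sketched as follows. The legacy field names (`CUST_NO`, `NAME`, `PHONE`, `SINCE`), the target schema and the phone and date formats are all hypothetical; a real migration would also validate and reconcile records against the source before the old system is retired.

```python
from datetime import datetime
from typing import Iterable, Iterator

# Hypothetical export from the legacy CRM; field names and formats are illustrative.
legacy_rows = [
    {"CUST_NO": "0042", "NAME": "Lovelace, Ada", "PHONE": "(555) 010-2000", "SINCE": "05/01/2019"},
    {"CUST_NO": "0043", "NAME": "Hopper, Grace", "PHONE": "555.010.3000", "SINCE": "11/15/2020"},
]


def extract(rows: Iterable[dict[str, str]]) -> Iterator[dict[str, str]]:
    """Extract: read records from the old system (here, an in-memory list)."""
    yield from rows


def transform(row: dict[str, str]) -> dict[str, object]:
    """Map old fields onto the new schema and normalize their formats."""
    last, first = [part.strip() for part in row["NAME"].split(",", 1)]
    digits = "".join(ch for ch in row["PHONE"] if ch.isdigit())
    return {
        "customer_id": int(row["CUST_NO"]),
        "first_name": first,
        "last_name": last,
        "phone": digits,
        "customer_since": datetime.strptime(row["SINCE"], "%m/%d/%Y").date().isoformat(),
    }


def load(records: Iterable[dict[str, object]], target: dict[int, dict[str, object]]) -> None:
    """Load: write into the new system, keyed by ID so a rerun overwrites instead of duplicating."""
    for rec in records:
        target[rec["customer_id"]] = rec


def migrate(rows: Iterable[dict[str, str]], target: dict[int, dict[str, object]]) -> int:
    """Run the whole migration and return how many records were moved."""
    transformed = [transform(r) for r in extract(rows)]
    load(transformed, target)
    return len(transformed)


new_crm: dict[int, dict[str, object]] = {}
print(migrate(legacy_rows, new_crm), new_crm[42])
```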

Data ethics

Data ethics refers to the moral principles and rules that guide the lawful and responsible use of data. Protecting people’s privacy, autonomy and rights requires considering the ethical implications of data collection, storage, analysis and distribution.

In the context of data analytics, data ethics may entail obtaining informed consent from people before collecting their personal information, ensuring that data is anonymized and aggregated to protect individual identities, and using data to benefit society while minimizing potential harm or discrimination.
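Two of these practices can be sketched in code: replacing direct identifiers such as email addresses with keyed hashes, and reporting only aggregate counts while suppressing groups small enough to single people out. Note that keyed hashing is pseudonymization rather than full anonymization, since anyone holding the key can re-link records. The key, field names and the minimum group size of 3 are illustrative assumptions.

```python
import hashlib
import hmac
from collections import Counter

# Hypothetical secret; in practice it would be stored separately from the data set.
PSEUDONYM_KEY = b"replace-with-a-securely-stored-secret"


def pseudonymize(identifier: str) -> str:
    """Replace a direct identifier with a keyed hash so records stay linkable without revealing who they belong to."""
    return hmac.new(PSEUDONYM_KEY, identifier.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def prepare(records: list[dict[str, str]]) -> list[dict[str, str]]:
    """Drop the raw email and keep only a pseudonym plus the fields needed for analysis."""
    return [{"user": pseudonymize(r["email"]), "region": r["region"]} for r in records]


def aggregate_with_threshold(records: list[dict[str, str]], field: str, min_count: int = 3) -> dict[str, int]:
    """Count records per group, suppressing groups too small to hide individuals."""
    counts = Counter(r[field] for r in records)
    return {group: n for group, n in counts.items() if n >= min_count}


# Hypothetical survey responses.
survey = [
    {"email": "a@example.com", "region": "North"},
    {"email": "b@example.com", "region": "North"},
    {"email": "c@example.com", "region": "North"},
    {"email": "d@example.com", "region": "South"},
]
print(aggregate_with_threshold(prepare(survey), "region"))  # South is suppressed
```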

Data lake

The term “data lake” describes a centralized repository that houses enormous amounts of raw, unprocessed data in its original format. Without the need for predefined schemas, it enables the storage and analysis of structured, semi-structured and unstructured data. Because data lakes are flexible and scalable, organizations can explore and analyze data in a more open-ended, exploratory way.

For instance, a business might have a data lake where it maintains different types of client data, including transaction histories, interactions on social media and online browsing habits. Instead of transforming and structuring the data upfront, the data lake stores the raw data as it is, allowing data scientists and analysts to access and process it as needed for specific use cases, such as customer segmentation or personalized marketing campaigns.
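This "store raw now, apply structure later" idea (often called schema-on-read) can be sketched with plain files: records land in folders by source exactly as received, and a consumer decides which fields matter only when it reads them. The folder layout (`raw/<source>/part-0000.jsonl`), the field names and the helper functions are hypothetical; production data lakes usually sit on object storage and use open file and table formats.

```python
import json
import tempfile
from pathlib import Path


def land_raw(lake: Path, source: str, records: list[dict]) -> Path:
    """Write records exactly as received, partitioned by source; no schema is enforced on write."""
    target = lake / "raw" / source
    target.mkdir(parents=True, exist_ok=True)
    path = target / "part-0000.jsonl"
    with path.open("a", encoding="utf-8") as f:
        for rec in records:
            f.write(json.dumps(rec) + "\n")
    return path


def read_spend_by_customer(lake: Path) -> dict[str, float]:
    """Schema-on-read: this consumer only cares about records that carry an 'amount'."""
    totals: dict[str, float] = {}
    for path in sorted((lake / "raw").glob("*/*.jsonl")):
        with path.open(encoding="utf-8") as f:
            for line in f:
                rec = json.loads(line)
                if "amount" in rec:  # skip records (e.g. browsing events) with no spend
                    totals[rec["customer"]] = totals.get(rec["customer"], 0.0) + rec["amount"]
    return totals


def demo() -> dict[str, float]:
    """Land two differently shaped hypothetical sources, then query them together."""
    with tempfile.TemporaryDirectory() as d:
        lake = Path(d)
        land_raw(lake, "transactions", [
            {"customer": "c1", "amount": 20.0},
            {"customer": "c2", "amount": 5.5},
            {"customer": "c1", "amount": 4.5},
        ])
        land_raw(lake, "browsing", [{"customer": "c1", "page": "/shoes", "ms_on_page": 5400}])
        return read_spend_by_customer(lake)


print(demo())
```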

Data augmentation

Data augmentation is the process of enhancing or enriching existing data by adding or modifying specific traits or features. It is frequently used in machine learning and data analysis to increase the quantity and diversity of training data, which in turn can improve model performance and generalization.

For instance, in image recognition, data augmentation may involve transforming existing photos by rotating, resizing or flipping them to produce new versions of the data. Machine learning models can then be trained on this enlarged data set to recognize objects or patterns more accurately and robustly.
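To illustrate, the sketch below treats a grayscale image as a list of pixel rows and produces flipped and rotated copies that keep the original label. It covers only flips and 90-degree rotations (not resizing), and the tiny 2x2 "image" is a made-up stand-in; real projects typically rely on an image-processing library for these transforms.

```python
Image = list[list[int]]  # grayscale pixel values, one inner list per row


def flip_horizontal(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def rotate_90_clockwise(img: Image) -> Image:
    """Reverse the row order, then transpose: the bottom row becomes the first column."""
    return [list(row) for row in zip(*img[::-1])]


def augment(img: Image) -> list[Image]:
    """Return the original plus a horizontal flip, a 90-degree and a 180-degree rotation."""
    rotated = rotate_90_clockwise(img)
    return [img, flip_horizontal(img), rotated, rotate_90_clockwise(rotated)]


def augment_dataset(samples: list[tuple[Image, str]]) -> list[tuple[Image, str]]:
    """Expand a labeled data set; every variant inherits its source image's label."""
    return [(variant, label) for img, label in samples for variant in augment(img)]


tiny = [[1, 2], [3, 4]]
for variant in augment(tiny):
    print(variant)
```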

Data engineering

Data engineering is the process of designing, building and maintaining the systems and infrastructure needed for data collection, storage and processing. Its tasks include data ingestion, transformation, integration and pipeline building. Data engineers use various techniques and technologies to ensure efficient, reliable data flow across diverse systems and platforms.

A data engineer might, for example, be in charge of creating and maintaining a data warehouse architecture and designing Extract, Transform, Load (ETL) procedures to gather data from various sources, format it appropriately, and load it into the data warehouse. To enable seamless data integration and processing, they might also create data pipelines using tools like Apache Spark or Apache Kafka.
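A minimal ETL pipeline in this spirit can be written with Python generators and the standard library's `csv` and `sqlite3` modules, standing in for tools like Spark or Kafka. The CSV content, column names and the `orders` table are made up; rows that fail validation are simply skipped here, whereas a production pipeline would usually log or quarantine them.

```python
import csv
import io
import sqlite3
from typing import Iterable, Iterator

# Hypothetical raw export with inconsistent formatting and one bad row.
RAW_CSV = """order_id,store,amount
1,Austin,19.99
2,denver ,5.00
3,Austin,not-a-number
4,Denver,12.50
"""


def extract(text: str) -> Iterator[dict[str, str]]:
    """Extract: parse raw CSV rows into dictionaries."""
    yield from csv.DictReader(io.StringIO(text))


def transform(rows: Iterable[dict[str, str]]) -> Iterator[tuple[int, str, float]]:
    """Transform: validate types and normalize store names."""
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip unparseable rows in this sketch
        yield int(row["order_id"]), row["store"].strip().title(), amount


def load(rows: Iterable[tuple[int, str, float]], conn: sqlite3.Connection) -> None:
    """Load: upsert into the target table so reruns stay idempotent."""
    conn.execute("CREATE TABLE IF NOT EXISTS orders (order_id INTEGER PRIMARY KEY, store TEXT, amount REAL)")
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(text: str, conn: sqlite3.Connection) -> None:
    """Chain the stages; generators stream rows through without building full lists."""
    load(transform(extract(text)), conn)


db = sqlite3.connect(":memory:")
run_pipeline(RAW_CSV, db)
print(db.execute("SELECT store, SUM(amount) FROM orders GROUP BY store ORDER BY store").fetchall())
```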

Data integration

The process of merging data from various sources into a single view is known as data integration. Building a coherent, comprehensive data set entails combining data from many databases, systems or applications. Several techniques, including batch processing, real-time streaming and virtual integration, can be used to integrate data.

To understand consumer behavior and preferences comprehensively, a business might, for instance, combine customer data from many sources, such as CRM systems, marketing platforms and online transactions. This integrated data set can then be used for analytics, reporting and decision-making.
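A batch-style integration of the three sources in this example might look like the following. It assumes every source shares a common `customer_id` key, which is a simplification; matching records that lack a shared identifier is often the hardest part of real integration work. All records shown are hypothetical.

```python
from collections import defaultdict

# Hypothetical extracts from three separate systems.
crm = [{"customer_id": 1, "name": "Ada", "segment": "premium"},
       {"customer_id": 2, "name": "Grace", "segment": "standard"}]
marketing = [{"customer_id": 1, "email_opens": 12},
             {"customer_id": 2, "email_opens": 3}]
transactions = [{"customer_id": 1, "amount": 120.0},
                {"customer_id": 1, "amount": 30.0},
                {"customer_id": 2, "amount": 45.0}]


def integrate(crm: list[dict], marketing: list[dict], transactions: list[dict]) -> dict[int, dict]:
    """Build one profile per customer ID by merging attributes and aggregating transactions."""
    profiles: dict[int, dict] = defaultdict(dict)
    for rec in crm:
        profiles[rec["customer_id"]].update(name=rec["name"], segment=rec["segment"])
    for rec in marketing:
        profiles[rec["customer_id"]]["email_opens"] = rec["email_opens"]
    for rec in transactions:
        profile = profiles[rec["customer_id"]]
        profile["total_spend"] = profile.get("total_spend", 0.0) + rec["amount"]
        profile["orders"] = profile.get("orders", 0) + 1
    return dict(profiles)


print(integrate(crm, marketing, transactions))
```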

Data profiling

Data profiling involves analyzing data to understand its quality, structure and content. It aims to assess the accuracy, completeness, consistency and uniqueness of data attributes. Common approaches include statistical summaries, dedicated profiling tools and exploratory data analysis.

For example, a data analyst may perform data profiling on a data set to identify missing values, outliers or inconsistencies in data patterns. This helps identify data quality issues, enabling data cleansing and remediation efforts to ensure the accuracy of the data for further analysis and decision-making.
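A small column profiler along these lines might report missing values, distinct values, duplicates and outliers. It flags outliers with the common rule of 1.5 times the interquartile range (IQR) beyond the quartiles, which is one choice among several; the sample ages are invented and include one deliberately implausible value.

```python
import statistics


def profile_column(values: list) -> dict[str, object]:
    """Summarize completeness, uniqueness and (for numeric data) outliers for one column."""
    present = [v for v in values if v is not None]
    report: dict[str, object] = {
        "count": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
        "duplicates": len(present) - len(set(present)),
    }
    numeric = [v for v in present if isinstance(v, (int, float))]
    if len(numeric) >= 4:  # need a few points for quartiles to be meaningful
        q1, _, q3 = statistics.quantiles(numeric, n=4)
        iqr = q3 - q1
        low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
        report["outliers"] = [v for v in numeric if v < low or v > high]
    return report


# Hypothetical "age" column with gaps, a repeat and a data-entry error.
ages = [34, 29, None, 41, 29, 38, 230, None, 35]
print(profile_column(ages))
```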